GPU621/History of Parallel Computing and Multi-core Systems

History of Parallel Computing and Multi-core Systems

We will be looking into the history and evolution of parallel computing by focusing on three distinct timelines:

1) The earliest developments of multi-core systems and how they gave rise to parallel programming

2) How chip makers marketed this new frontier in computing, with enterprise businesses as the primary target audience

3) How quickly it gained traction and when the two semiconductor giants Intel and AMD decided to introduce multi-core processors to domestic users, thus making parallel computing more widely available

From each of these timelines, we will be inspecting certain key events and how they impacted other events in their progression. This will help us understand how parallel computing came to fruition, its role and impact in many industries today, and what the future may hold.

Group Members

Omri Golan

Patrick Keating

Yuka Sadaoka

Progress

Update 1 (11/12/2020):

-> Basic definition of parallel computing

-> Research on limitation of single-core systems and subsequent advent of multi-core with respect to parallel computing capabilities

-> Earliest development and usage of parallel computing

-> History and development of supercomputers and their parallel computing nature (incl. modern supercomputers -> world's fastest supercomputer)

Update 2 (11/27/2020):

-> Added new content (Demise of Single-Core and Rise of Multi-Core Systems)

Update 3 (11/29/2020):

-> Added new content (Domination of Two Semiconductor Giants Intel and AMD In Multi-core Processor Development)

Update 4 (11/30/2020):

-> Earliest Application of Parallel Computing

-> Added graphics to Parallel Programming vs. Concurrent Programming

-> Parallel Computing in Supercomputers and HPC

-> Added References section

Update 5 (12/02/2020):

-> Added how multicore systems were marketed

Update 6 (12/03/2020):

-> Updated references and added image

Preface

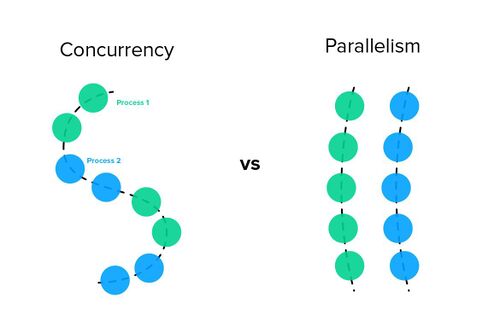

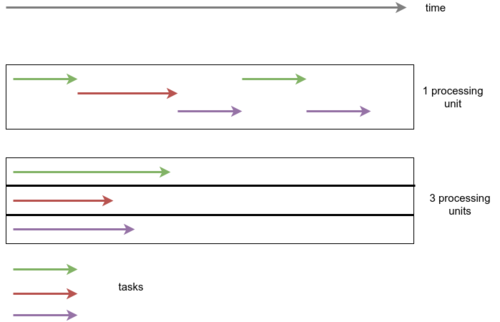

Parallel Programming vs. Concurrent Programming

Parallel computing is the idea that a large problem can be split into smaller, independent tasks that run simultaneously on more than one processor. This concept is different from concurrent programming, which is the composition of multiple processes that may begin and end at different times but are managed by the host system’s task scheduler, which frequently switches between them. This gives the illusion of multi-tasking, as multiple tasks are in progress on a single processor even though only one is executing at any instant. Concurrent computing can occur on both single and multi-core processors, whereas parallel computing distributes the workload across multiple physical processors or cores. Thus, parallel computing is hardware-dependent.
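The decomposition described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the helper names (`split_into_chunks`, `sum_of_squares`, `decomposed_sum_of_squares`) and the choice of four workers are ours. In standard CPython builds, threads doing CPU-bound work are interleaved by the interpreter rather than run simultaneously, so this version shows the concurrent case. Swapping in `ProcessPoolExecutor` from the same module would let the operating system place the tasks on separate cores, which is the parallel case.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(values, parts):
    """Split values into `parts` contiguous chunks of near-equal size."""
    size, extra = divmod(len(values), parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks take one additional element each.
        end = start + size + (1 if i < extra else 0)
        chunks.append(values[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """Independent task: it needs no data from any other chunk."""
    return sum(x * x for x in chunk)


def decomposed_sum_of_squares(values, workers=4):
    """Run one task per chunk, then combine the partial results."""
    chunks = split_into_chunks(values, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


data = list(range(1000))
# The decomposed result matches the serial one.
print(decomposed_sum_of_squares(data) == sum_of_squares(data))  # prints True
```

The important property is that each chunk can be processed without knowing anything about the others. Whether the tasks then run concurrently or in parallel depends on the hardware and runtime, not on the decomposition itself.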

Demise of Single-Core and Rise of Multi-Core Systems

Transition from Single to Multi-Core

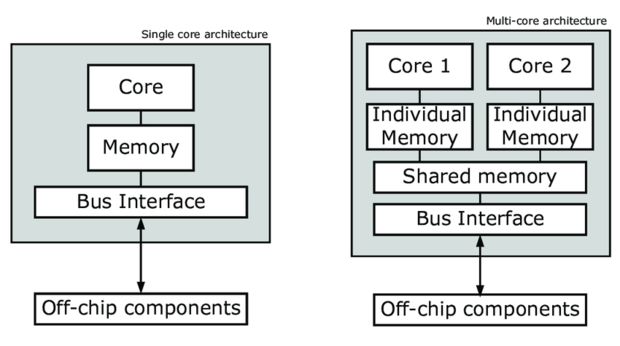

The transition from single to multi-core systems came from the need to address the limitations of manufacturing technologies for single-core systems. Single-core systems suffered from several limiting factors, including:

- individual transistor size

  - multiple transistors combined in an organized structure form logic gates: AND, OR, NOT, NOR, XOR, XNOR, NAND

  - logic gates are the foundation of all integrated circuits (ICs) and enable logic functions of varying complexity to be performed

- physical limits in the design of integrated circuits, which caused significant heat dissipation

  - the heat dissipation issue is attributed to the power wall: the processor consumed more power to reach higher operating frequencies, which in turn increased the heat generated

- synchronization issues with coherency of data.

Instruction-Level Parallelism (ILP) is a common measure of how many instructions of a given program a processor can execute simultaneously. Single-core processors used several ILP techniques to improve performance, such as superscalar pipelining, speculative execution, and out-of-order execution. A superscalar processor can issue more than one instruction per clock cycle by dispatching them to multiple execution units. However, these techniques did not benefit all applications equally, because the number of instructions that can run simultaneously varies from program to program. ILP was also limited by the disparity between the speed of the processor and the access latency of system memory: the processor lost many cycles stalling while it waited for fetch operations from system memory to complete.
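To make the cost of memory stalls concrete, here is a small sketch using the textbook model in which stall cycles are added to the ideal cycles per instruction (CPI). The function name `effective_cpi` and all the numbers are hypothetical and chosen only for illustration; they do not describe any particular processor.

```python
def effective_cpi(base_cpi, accesses_per_instr, miss_rate, miss_penalty_cycles):
    """Cycles per instruction once memory stalls are included.

    base_cpi            -- ideal CPI with no stalls (0.5 means two instructions per cycle)
    accesses_per_instr  -- average memory accesses per instruction
    miss_rate           -- fraction of accesses that must go to system memory
    miss_penalty_cycles -- cycles the core stalls for each such access
    """
    stall_cycles = accesses_per_instr * miss_rate * miss_penalty_cycles
    return base_cpi + stall_cycles


# Hypothetical superscalar core: ideal CPI 0.5, 0.3 memory accesses per
# instruction, 2% of them miss, and each miss costs 200 cycles.
cpi = effective_cpi(0.5, 0.3, 0.02, 200)
print(f"effective CPI: {cpi:.2f}")               # effective CPI: 1.70
print(f"slowdown vs. ideal: {cpi / 0.5:.1f}x")   # slowdown vs. ideal: 3.4x
```

Under these assumed numbers, a core that could ideally retire two instructions per cycle spends most of its time waiting on memory, which is why wider superscalar designs alone gave diminishing returns.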

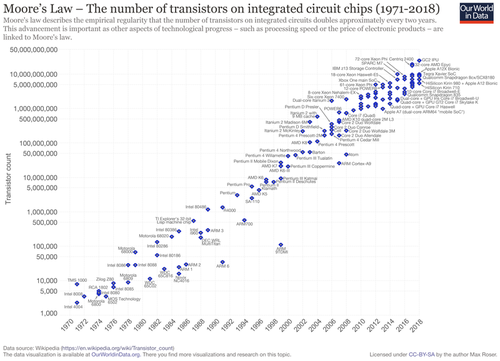

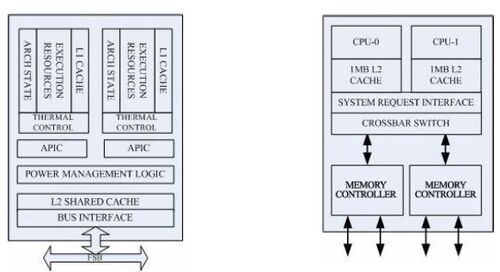

As manufacturing processes evolved in accordance with Moore’s Law, transistors shrank, and the number of transistors that could be packed onto a single processor die (the physical silicon chip itself) doubled roughly every two years. The additional transistor budget left room for more cores on a die than before. This led to increased demand for thread-level parallelism (TLP), to which many applications were better suited. Adding multiple cores to a processor also increased the system's overall parallel computing capabilities.
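The doubling rule can be written as a one-line projection. The sketch below assumes an idealized, perfectly regular two-year doubling period; the function name `projected_transistors` and the starting count are hypothetical and used only to show the arithmetic.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Idealized Moore's Law projection: the count doubles once per period."""
    return initial_count * 2 ** (years / doubling_period_years)


# A hypothetical die with one million transistors, projected ten years ahead:
# five doublings, so 32 times the original count.
print(int(projected_transistors(1_000_000, 10)))  # prints 32000000
```

Real manufacturing did not follow the rule this exactly, but the exponential shape explains how die budgets grew large enough to hold several complete cores.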

Usage of Parallel Computing and HPC

Earliest Applications of Parallel Computing

The idea and application of parallel computing predate multi-core processors. Between the 1960s and 1970s, it was heavily utilized in industries that relied on large R&D investments, such as aircraft design and defense, as well as in modelling scientific and engineering problems. Parallel computing gave a significant edge over regular single-threaded programming by enabling more computing power to be directed at compute-intensive applications. This enabled complex scientific and engineering simulations and computations to take full advantage of the scaled processing power of parallel systems. The systems allowed simulations for these problems to be accurately modelled and enabled every critical interaction between dynamic events to be tracked and recorded. The code for these simulations employed parallel programming models that fall under the MIMD class of Flynn’s Taxonomy, such as SPMD (single program, multiple data) and MPMD (multiple programs, multiple data).
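The difference between SPMD and MPMD can be sketched as follows. This is a sequential simulation written for illustration, not code from any historical system: on a real machine each rank would be a separate process or node, and the names `block_range`, `spmd_program`, `run_spmd`, and `run_mpmd` are our own.

```python
def block_range(rank, size, n):
    """Half-open index range [start, end) owned by `rank` out of `size` workers."""
    base, extra = divmod(n, size)
    start = rank * base + min(rank, extra)
    end = start + base + (1 if rank < extra else 0)
    return start, end


def spmd_program(rank, size, data):
    """SPMD: every worker runs this same program; only `rank` differs."""
    start, end = block_range(rank, size, len(data))
    return sum(data[start:end])


def run_spmd(data, size):
    # Simulated one rank after another here; real ranks would run at the same time.
    partials = [spmd_program(rank, size, data) for rank in range(size)]
    return sum(partials)


def run_mpmd(tasks):
    """MPMD: each worker runs a different program on its own data."""
    return [program(data) for program, data in tasks]


print(run_spmd(list(range(10)), 3))                           # prints 45
print(run_mpmd([(min, [4, 2, 9]), (max, [7, 1]), (sum, [1, 2, 3])]))  # prints [2, 7, 6]
```

In the SPMD case the work is divided by data, with the rank selecting which slice each copy of the program handles. In the MPMD case the work is divided by function, with each worker given a different program.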

Today, almost every industry has found at least one practical application for parallel computing, and the world is witnessing its evolving capabilities through one dominant contender: Artificial Intelligence, which has made popular and demanding use of GPUs to build deep learning neural networks. Designing and implementing a parallel program, whether by parallelizing an existing serial program or by writing one from scratch with parallelization built in from the start, used to be a very tedious, iterative, and error-prone process. Identifying which parts of a program could be parallelized and then implementing them was complex and time-consuming. Over time, various tools have been developed to ease and shorten the manual process, such as parallelizing compilers, pre-processor directives (like OpenMP’s), and compiler flags (like the ones used at the terminal or command prompt). Manually analyzing potential opportunities for parallelization is still an important step towards determining how much of a performance boost can be achieved and whether it is worth investing the time to implement it.
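One common way to estimate whether parallelization is worth the effort is Amdahl's law, which bounds the speedup by the fraction of the program that must remain serial. The law is not discussed in the text above; we bring it in here as a standard estimate, and the function name and the 90% figure are assumptions for illustration.

```python
def amdahl_speedup(parallel_fraction, workers):
    """Upper bound on speedup when only part of a program can be parallelized."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Assume analysis shows that 90% of the run time can be parallelized.
for workers in (2, 4, 8, 16):
    print(workers, f"{amdahl_speedup(0.9, workers):.2f}")

# Even with unlimited workers the serial 10% caps the speedup near 10x.
print(f"{1.0 / (1.0 - 0.9):.2f}")  # prints 10.00
```

With 8 workers the estimate is about 4.71x rather than 8x, which shows why the up-front analysis of the serial portion matters before committing to a parallel rewrite.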

Parallel Computing in Supercomputers and HPC

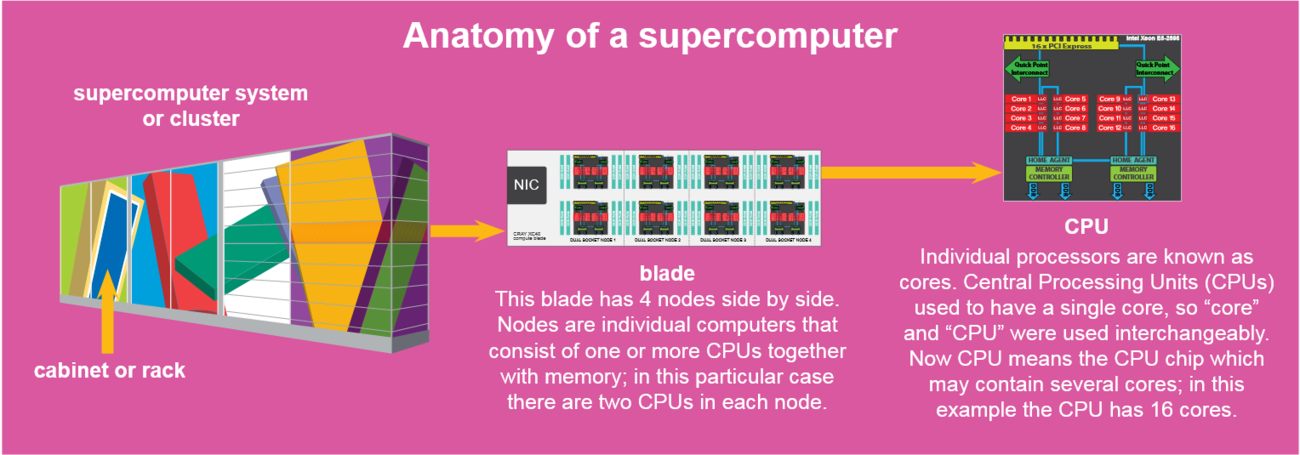

Parallel computing used to be largely confined to High Performance Computing (HPC), which covers system architectures designed to handle high-speed, high-density calculations. When thinking of HPC, supercomputers are generally the types of machines that come to mind. Parallel programming became a significant sub-field of computer science by the late 1960s, and most compute-intensive processing happened on supercomputers that employed multiple physical CPUs on nodes with their own memory, sitting in blades (the container/case for the nodes) within racks/cabinets, and networked together in a hybrid-memory model.

Source: https://www.kth.se/polopoly_fs/1.764059.1600688458!/PDC_Pub_posters_20200101_supercomputer_basics_lres.pdf

The first supercomputer was designed and developed by Seymour Cray, an electrical engineer who is regarded as the father of supercomputing. He initially worked for Control Data Corporation, where he designed the CDC 6600, the first supercomputer and the fastest in the world between 1964 and 1969. He left in 1972 to form Cray Research and in 1975 announced his own supercomputer, the Cray-1. It was one of the most successful supercomputers in history, being the first to successfully implement the vector processor design, and was used until the late 1980s.

Source: http://www.extremetech.com/wp-content/uploads/2014/10/cray-1-nersc-disassembled.jpg

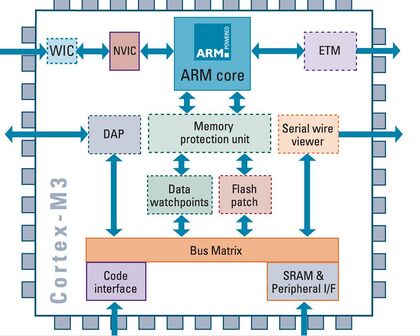

The 2000s saw a huge boom in the number of processors working in parallel, with counts reaching into the tens of thousands. A recent example of this evolution in parallel computing, High Performance Computing, and multi-core systems is Japan's Fugaku, the fastest supercomputer as of 2020, which was jointly developed by RIKEN and Fujitsu. It boasts an impressive 7.3 million cores, all of which are ARM-based, a first for a supercomputer at the top of the rankings. It uses a hybrid-memory model and a new network architecture that provides higher cohesion among all the nodes. The success of the new system marks a significant shift away from traditional supercomputer designs towards ARM-powered systems. It also demonstrates that HPC still has much room for improvement and innovation.

Multi-core products in the commercial market

Multi-core in server systems

In the early 2000s, the progress of single-core processor development was starting to slow. As mentioned above, single-core designs faced issues such as high power consumption, which also resulted in excessive heat. Many companies were running power-hungry servers and wanted to lower the operating costs of electricity and cooling. This is where IBM, a top company in the enterprise server market today, made a big name for itself.

In 2001, IBM was the first company to release a dual-core processor for UNIX systems on the market. The processor, called the IBM POWER4, was released as part of the eServer lineup in the pSeries system known as Regatta. Regatta put IBM in the spotlight for data centers and large enterprises and was advertised as having "twice the performance for half the cost". IBM continued to iterate on the POWER series of processors, and in 2010 expanded the number of cores from 2 to 8 with the release of the POWER7.

The invention and implementation of multi-core was crucial to the success IBM enjoys today. During the development of the POWER4 in the mid 90s, IBM had a market share of 15 percent, with other companies such as Sun and HP taking a large percentage of the market. By 2010, after releasing multiple iterations of its multi-core products, IBM had become a leader in the market with a share of 45 percent.

“The analyst community told us it literally blew their socks off. In a very short time we went from last place to industry leader.” – Carrie Altieri, vice president of communications for IBM’s Systems Technology Group

Desktop multi core systems

While IBM was dominating the market for server CPUs, there was still a gap in the market for multi-core desktop computers. In May of 2005, AMD released the Athlon 64 X2, its first dual-core desktop CPU. With the cheapest model in the line costing about $500 and the most powerful about $1000, it did not quite match IBM’s “twice the performance for half the cost”. However, the new product was still another large innovation in the industry by AMD, and a top competitor for the most powerful CPU on the market.

Domination of Two Semiconductor Giants Intel and AMD In Multi-core Processor Development

Early Product Launches

After the initial introduction of multi-core processors, Intel announced on April 18, 2005 that computer manufacturers Alienware, Dell and Velocity Micro had started selling desktop PCs and workstations based on Intel's first dual-core processor-based platform. These dual-core systems were aimed at computer hobbyists and entertainment enthusiasts.

The next month, May 2005, AMD released the Athlon 64 X2, its first dual-core desktop processor series, alongside the Turion processors, which were designed for the low-power mobile segment. AMD intended the Turion to compete against Intel’s mobile processors, initially the Pentium M and later the Intel Core and Intel Core 2 processors.

Intel's Core series, released in January 2006, was also the first Intel processor line used as the main CPU in an Apple Macintosh computer. The Core Duo was the CPU for the first-generation MacBook Pro, while the Core Solo appeared in Apple's Mac Mini line. Core Duo signified the beginning of Apple's shift to Intel processors across the entire Mac line.

The successor to Core was the mobile version of the Intel Core 2 line of processors, based on the Intel Core microarchitecture and released on July 27, 2006. The release of the mobile version of Intel Core 2 marked the reunification of Intel's desktop and mobile product lines, as Core 2 processors were released for both desktops and notebooks. The Core 2 architecture reached a wide range of devices, but Intel needed to produce something less expensive for the ultra-low-budget and portable markets. This led to the creation of Intel's Atom between 2008 and 2009, which used a 26 mm² die, less than one-fourth the size of the first Core 2 dies.

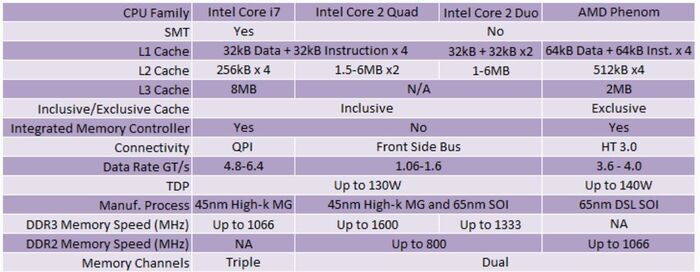

Meanwhile, AMD launched dual-, triple- and quad-core versions of the Phenom to target the budget desktop processor market. AMD considered the quad-core Phenoms to be the first "true" quad-core design, as these processors were a monolithic multi-core design, meaning all cores sat on the same silicon die, unlike Intel's Core 2 Quad series, which used a multi-chip module (MCM) design. The processors used the Socket AM2+ platform.

On March 18, 2008, Microsoft and Intel announced that they would invest $20 million in parallel computing research over the next five years. The University of California, Berkeley and the University of Illinois at Urbana-Champaign (UIUC) took part in the research. Dual- and quad-core processors were becoming increasingly common at the time, and the two tech giants wanted to support parallel computing researchers who were developing better ways of writing applications that could take advantage of multi-core processors.

On August 8, 2008, Intel announced the Nehalem microprocessor, which introduced the new Core i7 brand of high-end microprocessors to replace the Core 2 Duo. This brand targeted the business and high-end consumer markets for both desktop and laptop computers.

Later in the year, Intel planned to add more chips to the Celeron E1000 dual-core lineup, creating a comprehensive family of affordable dual-core chips aimed at cost-effective desktops. The launch of the low-cost Celeron E1000 series would force Intel's rival AMD either to cut the prices of its entry-level single-core Athlon LE and Sempron chips, or to introduce value dual-core processors of its own and reconsider the pricing of its single-core offerings.

With the processor market in a highly competitive state, Intel kept pushing its advantage. It reworked the Core architecture to create Nehalem, which added numerous enhancements, and released the Core i3, i5, and i7 brands. Core i7 officially launched on November 17, 2008 as a family of three quad-core desktop processor models, and further models appeared throughout 2009. Intel positioned the Core i3 as the new low end of its performance processor line following the retirement of the Core 2 brand.

AMD vs Intel in 2010s

Intel continued to grow its market share through the tick-tock improvement cycle of its Core series of microprocessors, which began with the 2006 release of the Core microarchitecture and continued for the next ten years. As a result, AMD was almost completely absent from the high-end CPU market for many years. However, AMD officially announced a new series of processors, named "Ryzen", during its New Horizon summit on December 13, 2016, and introduced the Ryzen 1000 series in February 2017, featuring up to 8 cores and 16 threads; these processors launched on March 2, 2017. Ryzen marked the return of AMD to the high-end CPU market, offering a product stack able to compete with Intel at every level. Having more processing cores, Ryzen processors offer greater multi-threaded performance at the same price point relative to Intel's Core processors. Since the release of Ryzen, AMD's CPU market share has increased while Intel's appears to have stagnated.

Multi-core Processors Today

Today, multi-core processor makers including AMD and Intel continue to improve their processors to satisfy high consumer demand. As of November 2020, both companies have announced new versions of their flagship lines: Intel's 11th Gen Intel Core processors with Intel Iris Xe graphics, and AMD's Ryzen 5000 Series desktop processor lineup powered by the new “Zen 3” architecture.

In 2020 the global multi-core processor market has become significantly more competitive. Today’s market is segmented into dual-core, quad-core, hexa-core, and octa-core processors. Increasing advancement in high-performance computing, graphics, and visualization technologies is anticipated to boost the growth of the multi-core processor market.

AMD vs Intel with OpenMP

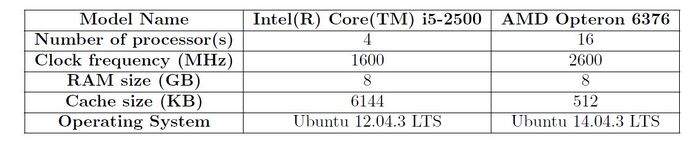

A comparison of OpenMP performance on AMD and Intel multi-core processors was conducted at Telkom University in 2018. The study simulated breaking waves using the Navier-Stokes equations, with the computation parallelized using OpenMP. The table shows the specifications of the AMD and Intel multi-core processors used in the comparison.

In both the serial and parallel runs, the execution time of the Intel processor was far better than that of the AMD processor: the Intel processor needed only half the time the AMD processor needed to run the simulation. However, the speedup and efficiency of the AMD processor were slightly higher than those of the Intel processor in general. The study attributes the Intel processor's faster execution to its cache, which the specifications table lists as larger than AMD's. According to the study, the Intel cache size is proportional to the total number of particles in the simulation, which gives it fast memory access.
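It may seem surprising that a processor can be faster in absolute terms yet show lower speedup and efficiency. The sketch below uses the usual definitions, speedup as serial time divided by parallel time and efficiency as speedup divided by the number of threads. The timings are hypothetical numbers chosen to reproduce the pattern and are not the figures measured in the study.

```python
def speedup(serial_time, parallel_time):
    """How many times faster the parallel run is than the serial run."""
    return serial_time / parallel_time


def efficiency(serial_time, parallel_time, threads):
    """Fraction of ideal linear speedup actually achieved per thread."""
    return speedup(serial_time, parallel_time) / threads


# Hypothetical timings in seconds on 4 threads (not taken from the study).
runs = {
    "Processor A": {"serial": 100.0, "parallel": 30.0},
    "Processor B": {"serial": 48.0, "parallel": 16.0},
}

for name, t in runs.items():
    s = speedup(t["serial"], t["parallel"])
    e = efficiency(t["serial"], t["parallel"], threads=4)
    print(f"{name}: parallel time {t['parallel']:.0f}s, speedup {s:.2f}, efficiency {e:.2f}")
# Processor A: parallel time 30s, speedup 3.33, efficiency 0.83
# Processor B: parallel time 16s, speedup 3.00, efficiency 0.75
```

Processor B finishes in roughly half the time, but because its serial run was already fast, the relative gain from adding threads is smaller. Speedup and efficiency measure how well a processor scales, not how fast it is.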

References

MULTI-CORE PROCESSORS — THE NEXT EVOLUTION IN COMPUTING. (n.d.). Retrieved November 12, 2020, from http://static.highspeedbackbone.net/pdf/AMD_Athlon_Multi-Core_Processor_Article.pdf

Aase, S. (2010, October). Driving a Powerful Future. IBM Systems Magazine, 34-39.

Barney, B. (2020, November 18). Introduction to Parallel Computing. Retrieved November 18, 2020, from https://computing.llnl.gov/tutorials/parallel_comp/

A very short history of parallel computing. (n.d.). Retrieved November 18, 2020, from https://subscription.packtpub.com/book/hardware_and_creative/9781783286195/1/ch01lvl1sec08/a-very-short-history-of-parallel-computing

What is Parallel Computing? Definition and FAQs. (n.d.). Retrieved November 12, 2020, from https://www.omnisci.com/technical-glossary/parallel-computing

Multi-core processor. (2020, November 18). Retrieved November 12, 2020, from https://en.wikipedia.org/wiki/Multi-core_processor

Instruction-level parallelism. (2020, September 23). Retrieved November 12, 2020, from https://en.wikipedia.org/wiki/Instruction-level_parallelism

Start, C. (2013, July 18). Multi-core Systems. Retrieved November 12, 2020, from https://www.cs.uaf.edu/2008/fall/cs441/proj1/fswcs/

Kaufmann, M., Koniges, A. E., Jette, M. A., Eder, D. C., Cahir, M., Moench, R., . . . Brooks, J. (n.d.). Part 1: The Parallel Computing Environment. Retrieved November 12, 2020, from http://wayback.cecm.sfu.ca/PSG/book/intro.html

Jackson, K. (2020, June 23). The 5 fastest supercomputers in the world (A. Alering, Ed.). Retrieved November 12, 2020, from https://sciencenode.org/feature/the-5-fastest-supercomputers-in-the-world.php

POWER4. (2020, October 19). Retrieved November 12, 2020, from https://en.wikipedia.org/wiki/POWER4

Power 4: The First Multi-Core, 1GHz Processor. (2011, August 05). Retrieved December 01, 2020, from https://www.ibm.com/ibm/history/ibm100/us/en/icons/power4/

No, J., Choudhary, A., Huang, W., Tafti, D., Resch, M., Gabriel, E., . . . Pressel, D. (n.d.). Parallel Computer. Retrieved November 18, 2020, from https://www.sciencedirect.com/topics/computer-science/parallel-computer

Wang, W. (n.d.). The Limitations of Instruction-Level Parallelism and Thread-Level Parallelism. Retrieved November 30, 2020, from https://wwang.github.io/teaching/CS5513_Fall19/lectures/ILP_Limitation.pdf

Wasson, S. (2005, May 09). AMD's Athlon 64 X2 processors. Retrieved December 01, 2020, from https://techreport.com/review/8295/amds-athlon-64-x2-processors/

Cray-1. (2020, November 23). Retrieved December 02, 2020, from https://en.wikipedia.org/wiki/Cray-1

Brodkin, J. (2008, March 20). Microsoft, Intel pour $20 million into parallel computing research. Retrieved December 03, 2020, from https://www.networkworld.com/article/2285046/microsoft--intel-pour--20-million-into-parallel-computing-research.html

Intel Core. (2020, November 28). Retrieved December 03, 2020, from https://en.wikipedia.org/wiki/Intel_Core

AMD Phenom. (2020, January 30). Retrieved December 03, 2020, from https://en.wikipedia.org/wiki/AMD_Phenom

X-bit labs. (n.d.). Retrieved December 03, 2020, from https://web.archive.org/web/20071104025126/http://www.xbitlabs.com/news/cpu/display/20071011171900.html

Sexton, M. (2018, September 08). The History Of Intel CPUs: Updated! Retrieved December 03, 2020, from https://www.tomshardware.com/picturestory/710-history-of-intel-cpus-3.html

Multi-core Introduction. (n.d.). Retrieved December 03, 2020, from https://software.intel.com/content/www/us/en/develop/articles/multi-core-introduction.html

Multi-Core Processors Market by Type, Growth and Analysis – 2025: MRFR. (n.d.). Retrieved December 03, 2020, from https://www.marketresearchfuture.com/reports/multi-core-processors-market-8248

Multi-core processor. (2020, November 18). Retrieved December 03, 2020, from https://en.wikipedia.org/wiki/Multi-core_processor

Alamsyah, M. N. A., et al. (2018). J. Phys.: Conf. Ser., 971, 012022. Retrieved from https://iopscience.iop.org/article/10.1088/1742-6596/971/1/012022/pdf

Swinburne, R. (2008, November 03). Intel Core i7 - Nehalem Architecture Dive. Retrieved December 03, 2020, from https://bit-tech.net/reviews/tech/cpus/intel-core-i7-nehalem-architecture-dive/1/