Difference between revisions of "Three-Star"

(→LZW Data Compression Algorithm) |

(→LZW Data Compression Algorithm) |

||

| Line 167: | Line 167: | ||

for (int s = 1; s <= n/2; s*=2) | for (int s = 1; s <= n/2; s*=2) | ||

| − | + | for(int j = 0; j < n; j +=2 * s) | |

| − | + | a[j] += a[j + s]; | |

As such, the major hotspot in this function is the second for loop. This is especially true since the file might be very large and we may be dealing with millions of characters! The one thing we need to worry about is that order does seem to matter for the second for loop. | As such, the major hotspot in this function is the second for loop. This is especially true since the file might be very large and we may be dealing with millions of characters! The one thing we need to worry about is that order does seem to matter for the second for loop. | ||

Revision as of 02:18, 8 April 2018

GPU610/DPS915 | Student List | Group and Project Index | Student Resources | Glossary

Contents

Three-Star

Team Members

Progress

Assignment 1

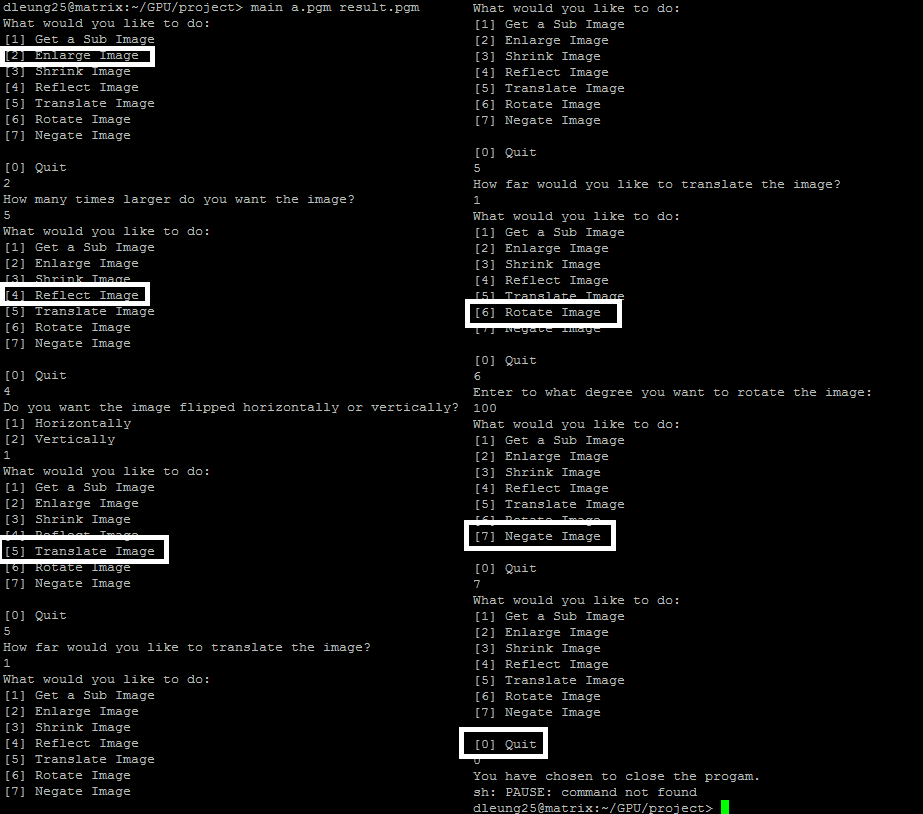

Image Profiling

Chosen to profile image profiling as shown here: http://www.dreamincode.net/forums/topic/76816-image-processing-tutorial/ , using the sample program files (main/image.h/image.cpp)

pulled PGM sample files from here: https://userpages.umbc.edu/~rostamia/2003-09-math625/images.html

file sizes being 512x512, about 262 KB each file

Compiled to produce a flat profile and a call graph

>g++ -g -O2 -pg -o main main.cpp

>main a.pgm result.pgm

Note: Enlarged image by max permitted by program (5) to get more viewable results, since the profile without enlarging it produces non-significant results

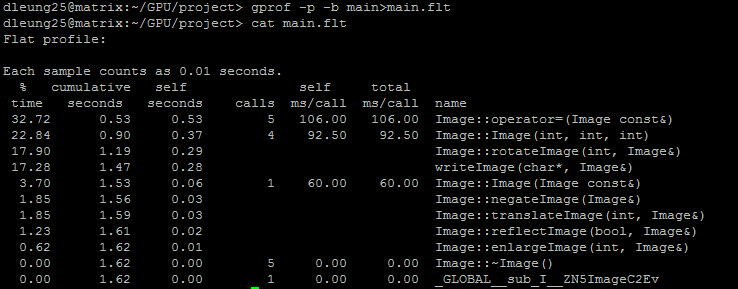

The results of the flat profile:

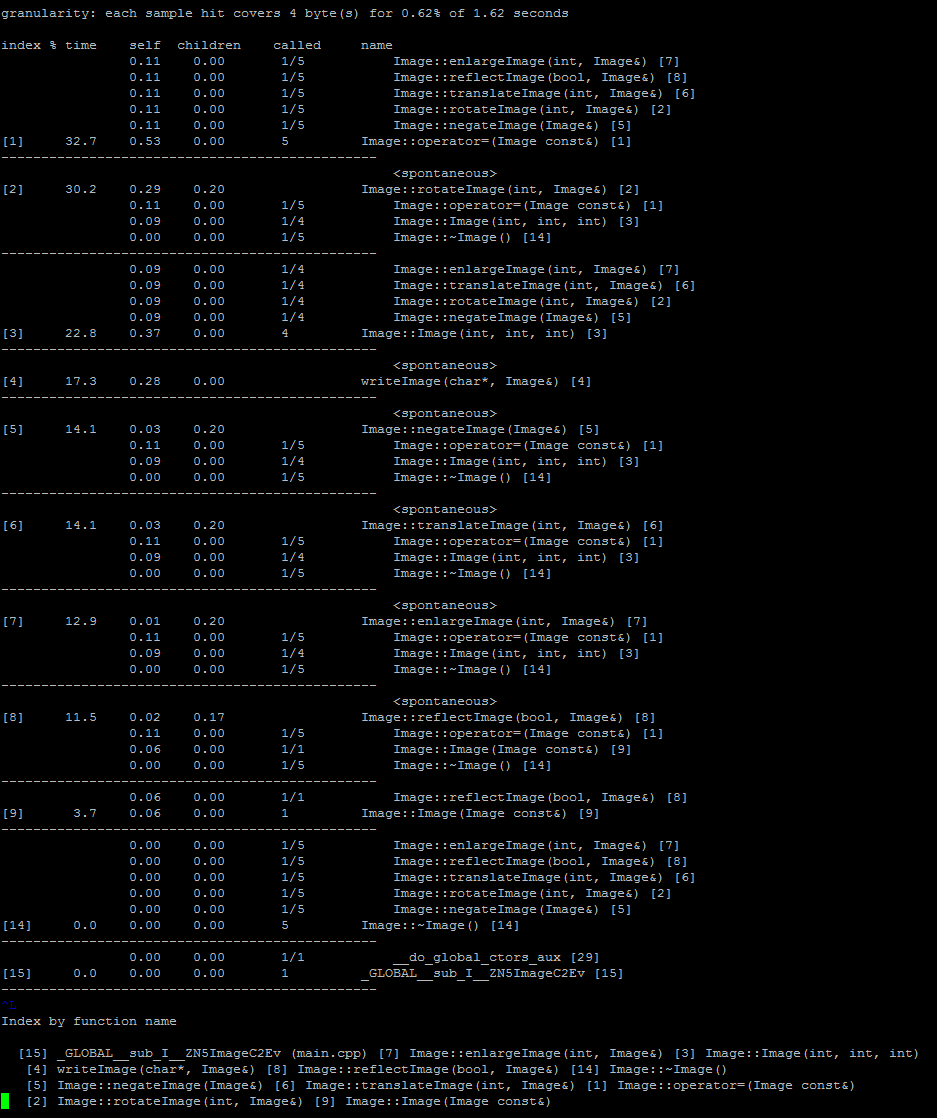

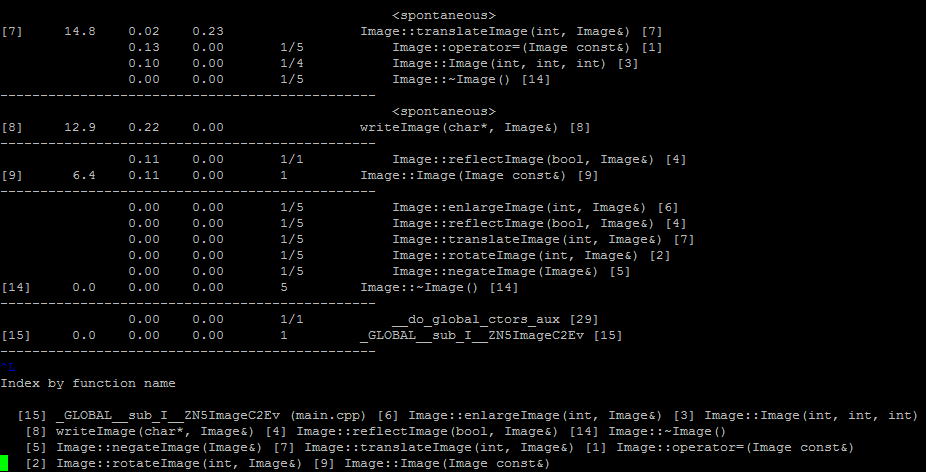

The results of the call graph

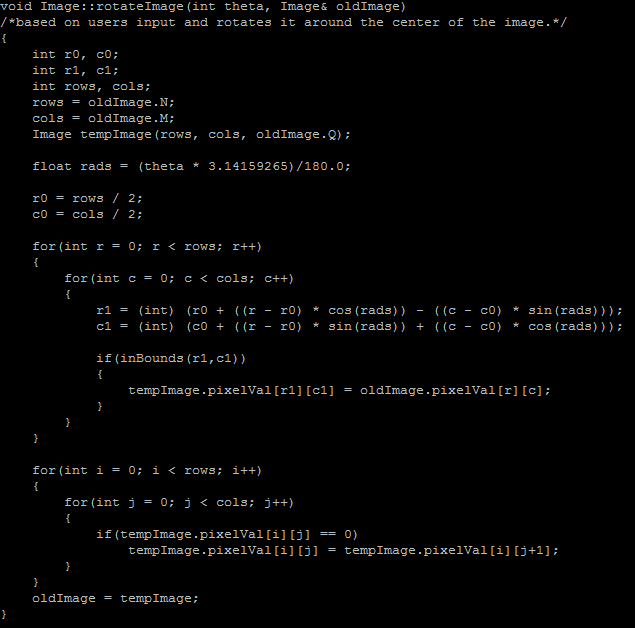

Rotate image function is one of the longer running functions and looks like it has potential for parallelization.

LZW Data Compression Algorithm

Timothy Moy profiled.

Original algorithm: https://codereview.stackexchange.com/questions/86543/simple-lzw-compression-algorithm

Raw Flat profile (50Mb Test file for compression):

Each sample counts as 0.01 seconds.

% cumulative self self total time seconds seconds calls us/call us/call name 35.52 4.23 4.23 compress(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, int, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >) 27.54 7.51 3.28 102062309 0.03 0.03 std::_Hashtable<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int>, std::allocator<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int> >, std::__detail::_Select1st, std::equal_to<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::hash<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::__detail::_Mod_range_hashing, std::__detail::_Default_ranged_hash, std::__detail::_Prime_rehash_policy, std::__detail::_Hashtable_traits<true, false, true> >::_M_find_before_node(unsigned int, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, unsigned int) const 20.15 9.91 2.40 204116423 0.01 0.01 std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >::_M_replace_aux(unsigned int, unsigned int, unsigned int, char) 8.23 10.89 0.98 49629412 0.02 0.05 std::__detail::_Map_base<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int>, std::allocator<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int> >, std::__detail::_Select1st, std::equal_to<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::hash<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::__detail::_Mod_range_hashing, std::__detail::_Default_ranged_hash, std::__detail::_Prime_rehash_policy, std::__detail::_Hashtable_traits<true, false, true>, true>::operator[](std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) 4.28 11.40 0.51 52428800 0.01 0.01 std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >::_M_assign(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) 3.02 11.76 0.36 52436762 0.01 0.01 show_usage() 1.26 11.91 0.15 _Z22convert_char_to_stringB5cxx11PKci 0.00 11.91 0.00 4097 0.00 0.00 std::_Hashtable<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int>, std::allocator<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int> >, std::__detail::_Select1st, std::equal_to<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::hash<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::__detail::_Mod_range_hashing, std::__detail::_Default_ranged_hash, std::__detail::_Prime_rehash_policy, std::__detail::_Hashtable_traits<true, false, true> >::_M_insert_unique_node(unsigned int, unsigned int, std::__detail::_Hash_node<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, int>, true>*) 0.00 11.91 0.00 22 0.00 0.01 std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >::_M_mutate(unsigned int, unsigned int, char const*, unsigned int) 0.00 11.91 0.00 1 0.00 0.00 _GLOBAL__sub_I__Z18convert_int_to_binB5cxx11i 0.00 11.91 0.00 1 0.00 28.13 std::_Hashtable<std::__cxx11::basic_

Summarized Flat Profile (50Mb Test file for compression):

% cumulative self self total time seconds seconds calls us/call us/call name 35.52 4.23 4.23 compress() 27.54 7.51 3.28 102062309 0.03 0.03 std::_Hashtable 20.15 9.91 2.40 204116423 0.01 0.01 std::__cxx11::basic_string 8.23 10.89 0.98 49629412 0.02 0.05 std::__detail::_Map_base 4.28 11.40 0.51 52428800 0.01 0.01 std::__cxx11::basic_string 3.02 11.76 0.36 52436762 0.01 0.01 show_usage() 1.26 11.91 0.15 _Z22convert_char_to_stringB5cxx11PKci 0.00 11.91 0.00 4097 0.00 0.00 std::_Hashtable 0.00 11.91 0.00 22 0.00 0.01 std::__cxx11::basic_string 0.00 11.91 0.00 1 0.00 0.00 _GLOBAL__sub_I__Z18convert_int_to_binB5cxx11i 0.00 11.91 0.00 1 0.00 28.13 std::_Hashtable<std::__cxx11::basic_

Note how the compress() function takes up the largest amount of time (over one third), then the other functions which take up over 10% of the time are library functions. It is highly unlikely we could parallelize the library functions. The other functions that take up under 10% of the time will probably not give enough improvement in time to make a significant impact.

Thus, the function we should focus on is the compress function.

Summary of Compress() Profiles

| Size (MB) | Compress() time in seconds |

|---|---|

| 10 | 0.96 |

| 15 | 1.35 |

| 20 | 1.8 |

| 25 | 2.14 |

| 30 | 2.64 |

| 35 | 3.16 |

| 40 | 3.45 |

| 45 | 4.24 |

| 50 | 4.23 |

The compress function source code:

void compress(string input, int size, string filename) {

unordered_map<string, int> compress_dictionary(MAX_DEF);

//Dictionary initializing with ASCII

for ( int unsigned i = 0 ; i < 256 ; i++ ){

compress_dictionary[string(1,i)] = i;

}

string current_string;

unsigned int code;

unsigned int next_code = 256;

//Output file for compressed data

ofstream outputFile;

outputFile.open(filename + ".lzw");

// Possible area for improvement via reduction

for(char& c: input){

current_string = current_string + c;

if ( compress_dictionary.find(current_string) ==compress_dictionary.end() ){

if (next_code <= MAX_DEF)

compress_dictionary.insert(make_pair(current_string, next_code++));

current_string.erase(current_string.size()-1);

outputFile << convert_int_to_bin(compress_dictionary[current_string]);

current_string = c;

}

}

if (current_string.size())

outputFile << convert_int_to_bin(compress_dictionary[current_string]);

outputFile.close();

}

There are two loops which show possibility of parallelization:

for ( int unsigned i = 0 ; i < 256 ; i++ ){

compress_dictionary[string(1,i)] = i;

}

and

for(char& c: input){

current_string = current_string + c; // Possible area for improvement via reduction

if ( compress_dictionary.find(current_string) ==compress_dictionary.end() ){

if (next_code <= MAX_DEF)

compress_dictionary.insert(make_pair(current_string, next_code++));

current_string.erase(current_string.size()-1);

outputFile << convert_int_to_bin(compress_dictionary[current_string]);

current_string = c;

}

}

The first for loop is constant and probably won't show much improvement if we parallelize it.

Note the comment above the second for loop notes we can do something like this:

for (int i = 1; i < n; i+=) a[0] += a[i];

changed to

for (int s = 1; s <= n/2; s*=2)

for(int j = 0; j < n; j +=2 * s)

a[j] += a[j + s];

As such, the major hotspot in this function is the second for loop. This is especially true since the file might be very large and we may be dealing with millions of characters! The one thing we need to worry about is that order does seem to matter for the second for loop.

Conclusion

We decided to go with image profiling.

There are some possible issues with working with the simple-lzw-compression-algorithm and CUDA. You cannot use the C++ string type in a kernel because CUDA does not include a device version of the C++ String library that would be able run on the GPU. Even if it was possible to use string in a kernel, it's not something you would want to do because string handles memory dynamically, which would be likely to be slow.

Char explanation (replace tmr) - https://stackoverflow.com/questions/26993351/is-there-a-penalty-to-using-char-variables-in-cuda-kernels?utm_medium=organic&utm_source=google_rich_qa&utm_campaign=google_rich_qa