Difference between revisions of "Happy Valley"

(→Assignment 1) |

(→LZSS) |

||

| Line 10: | Line 10: | ||

=== Assignment 1 === | === Assignment 1 === | ||

| − | === LZSS === | + | ==== LZSS ==== |

[https://en.wikipedia.org/wiki/Lempel%E2%80%93Ziv%E2%80%93Storer%E2%80%93Szymanski LZSS] (Lempel–Ziv–Storer–Szymanski) is a compression algorithm that belongs to LZ77 family. It attempts to replace a string of symbols with a reference to a dictionary location of the same string. | [https://en.wikipedia.org/wiki/Lempel%E2%80%93Ziv%E2%80%93Storer%E2%80%93Szymanski LZSS] (Lempel–Ziv–Storer–Szymanski) is a compression algorithm that belongs to LZ77 family. It attempts to replace a string of symbols with a reference to a dictionary location of the same string. | ||

| Line 63: | Line 63: | ||

0.07 27.69 0.02 1 0.02 0.02 init_tree</pre> | 0.07 27.69 0.02 1 0.02 0.02 init_tree</pre> | ||

| − | <pre>/////////////////// | + | <pre>/////////////////// 250MB FILE /////////////////// |

[obelavina@localhost gpu610]$ cat 250M.flt | [obelavina@localhost gpu610]$ cat 250M.flt | ||

Flat profile: | Flat profile: | ||

| Line 123: | Line 123: | ||

|} | |} | ||

| − | [[File: | + | [[File:Lzss_perf.png]] |

'''Analysis''' | '''Analysis''' | ||

| Line 130: | Line 130: | ||

<pre>gprof ./lzss | gprof2dot | dot | convert - out.png</pre> | <pre>gprof ./lzss | gprof2dot | dot | convert - out.png</pre> | ||

| − | [[File: | + | |

| + | [[File:Lzss_calls.png]] | ||

The call graph demonstrates that insert_node function takes the bulk of work (83.48%). delete_node takes only 10% even though it’s called nearly as many times as insert_node. It is hard to determine if LZSS is a good candidate for GPU optimisation: data throughput can be quite large but the logic of the algorithm includes some branching which can introduce some issues while porting code to CUDA. I suspect that compress function itself will need to be parallized (rather than insert_node). | The call graph demonstrates that insert_node function takes the bulk of work (83.48%). delete_node takes only 10% even though it’s called nearly as many times as insert_node. It is hard to determine if LZSS is a good candidate for GPU optimisation: data throughput can be quite large but the logic of the algorithm includes some branching which can introduce some issues while porting code to CUDA. I suspect that compress function itself will need to be parallized (rather than insert_node). | ||

Revision as of 14:54, 26 February 2018

GPU610/DPS915 | Student List | Group and Project Index | Student Resources | Glossary

Contents

Project Name Goes here

Team Members

- Yalong Li, Pilot

- Olga Belavina, Pilot Lieutenant

Progress

Assignment 1

LZSS

LZSS (Lempel–Ziv–Storer–Szymanski) is a compression algorithm that belongs to LZ77 family. It attempts to replace a string of symbols with a reference to a dictionary location of the same string.

The C implementation includes both compression and un-compression functionalities, however only compression algorithm will be tested and profiled. The source code for LZSS an be found here.

Data Overview

Supplying dummy text file to the compression function may not reflect real-world performance. Meaningful data needs to be used for the compression algorithm bench-marking and profiling since randomly generated text does not follow language rules/patterns (meaning it’s harder to compress such data).

The CIA world fact book was used as our test material. The file itself is only 4 megabytes, so in order to benchmark LZSS for larger size, world fact book text was concatenated to itself (many times) to reach certain size.

| File Name | Size (MB) |

|---|---|

| 1GB.txt | 1071 |

| 500M.txt | 536 |

| 250M.txt | 268 |

| 125M.txt | 134 |

Profiling

Binary was produced (for both debugging and profiling) with gcc command. Code snippet below demonstrates profiling commands used for 250 megabyte file (same logic was applied to other text files). The binary is supplied with the original text file as its first argument and destination file for the compressed output.

[obelavina@localhost gpu610]$ gcc lzss.c -o lzss [obelavina@localhost gpu610]$ ./lzss c 250M.txt 250M.compressed In : 280730901 bytes Out: 149085687 bytes Out/In: 0.531 [obelavina@localhost gpu610]$ gprof -p -b lzss > 250M.flt

GPROF Result

/////////////////// 125MB FILE /////////////////// [obelavina@localhost gpu610]$ cat 125M.flt Flat profile: Each sample counts as 0.01 seconds. % cumulative self self total time seconds seconds calls s/call s/call name 82.23 22.73 22.73 140365467 0.00 0.00 insert_node 11.29 25.85 3.12 140365451 0.00 0.00 delete_node 6.58 27.67 1.82 1 1.82 27.69 compress 0.07 27.69 0.02 1 0.02 0.02 init_tree

/////////////////// 250MB FILE /////////////////// [obelavina@localhost gpu610]$ cat 250M.flt Flat profile: Each sample counts as 0.01 seconds. % cumulative self self total time seconds seconds calls s/call s/call name 83.53 43.49 43.49 280730917 0.00 0.00 insert_node 10.42 48.92 5.43 280730901 0.00 0.00 delete_node 6.08 52.09 3.17 1 3.17 52.13 compress 0.08 52.13 0.04 1 0.04 0.04 init_tree /////////////////// 500MB FILE /////////////////// [obelavina@localhost gpu610]$ cat 500M.flt Flat profile: Each sample counts as 0.01 seconds. % cumulative self self total time seconds seconds calls s/call s/call name 83.61 87.34 87.34 561461817 0.00 0.00 insert_node 10.82 98.64 11.30 561461801 0.00 0.00 delete_node 5.58 104.46 5.83 1 5.83 104.55 compress 0.09 104.55 0.09 1 0.09 0.09 init_tree /////////////////// 1GB FILE /////////////////// [obelavina@localhost gpu610]$ cat 1G.flt Flat profile: Each sample counts as 0.01 seconds. % cumulative self self total time seconds seconds calls s/call s/call name 83.59 175.05 175.05 1122923616 0.00 0.00 insert_node 10.60 197.26 22.21 1122923600 0.00 0.00 delete_node 5.81 209.44 12.18 1 12.18 209.69 compress 0.12 209.69 0.25 1 0.25 0.25 init_tree

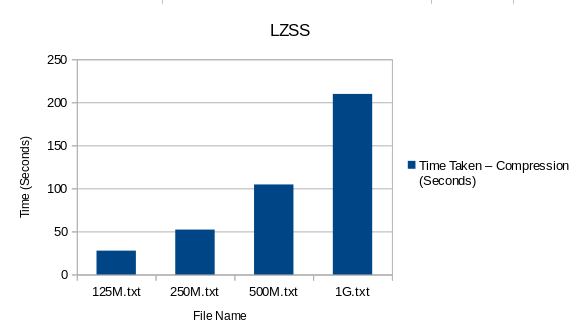

Plotted Result

| File Name | File Size (MB) | Total Time Taken – Compression (Seconds) |

|---|---|---|

| 125M.txt | 134 | 27.69 |

| 250M.txt | 268 | 52.13 |

| 500M.txt | 536 | 104.55 |

| 1G.txt | 1071 | 209.69 |

Analysis

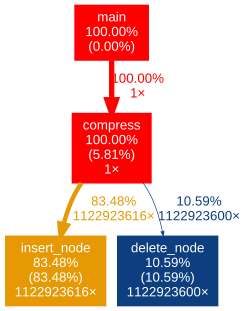

Lets first try to visualise the function calls so it is easier to determine where LZSS algorithm spends most of the time.

gprof ./lzss | gprof2dot | dot | convert - out.png

The call graph demonstrates that insert_node function takes the bulk of work (83.48%). delete_node takes only 10% even though it’s called nearly as many times as insert_node. It is hard to determine if LZSS is a good candidate for GPU optimisation: data throughput can be quite large but the logic of the algorithm includes some branching which can introduce some issues while porting code to CUDA. I suspect that compress function itself will need to be parallized (rather than insert_node).

Resources:

The data source (The Large Corpus): http://corpus.canterbury.ac.nz/descriptions/#cantrbry

LZSS Source Code: http://my.execpc.com/~geezer/code/lzss.c