GPU621/Analyzing False Sharing

Group Members

Preface

In multicore concurrent programming, if contention on mutual-exclusion locks is a "performance killer", then false sharing is a "performance assassin". The difference is that a killer is visible: when we meet one, we can choose to fight, run, take a detour, or beg for mercy. An assassin hides in the shadows, waiting for the chance to deliver a fatal blow, and is very hard to guard against. When lock contention hurts concurrent performance, we can take various measures to improve the program (such as shortening the critical section or using atomic operations). False sharing, however, does not show up in the code we write, so the problem is hard to find and therefore hard to fix. It stays in the dark and seriously drags down concurrent performance while we struggle to do anything about it.

What to know before understanding false sharing

CPU cache architecture

The CPU is the heart of the computer: all operations and programs are ultimately executed by it.

Main memory (RAM) is where the data lives. Several levels of cache sit between the CPU and main memory, because accessing main memory directly is relatively slow.

If you perform the same operation on a piece of data many times, it makes sense to keep that data close to the CPU while the operation runs. A loop counter is a good example: you do not want to go to main memory on every iteration to fetch the counter and increment it.

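The closer a cache is to the CPU, the smaller and faster it is. L1 is the smallest and fastest, and L2 is larger and slower; both are typically private to a single core. L3, which is common in modern multicore machines, is larger and slower still and is shared by all CPU cores on a single socket. Finally, main memory, which holds all the data for the running program, is the largest and slowest level and is shared by all CPU cores on all sockets.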

When the CPU performs an operation, it first looks for the required data in L1, then L2, then L3; if none of these caches holds it, the data has to be fetched from main memory. The farther the CPU has to go, the longer the operation takes. So if an operation runs very frequently, try to keep its data in the L1 cache.
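The lookup order described above can be sketched as a toy model. This is only an illustration under strong assumptions: `ToyHierarchy` and `access` are hypothetical names, each level is an unbounded set of line addresses, and capacity, eviction, and timing are ignored entirely. Real hardware is far more involved.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_set>

// Toy model (hypothetical): each cache level is just the set of line
// addresses it currently holds. No capacity limit, no eviction, no timing.
struct ToyHierarchy {
    std::array<std::unordered_set<std::uint64_t>, 3> levels;  // L1, L2, L3

    // Searches L1, then L2, then L3, and returns the name of the level that
    // served the request. The line is then copied into every closer level,
    // so the next access to the same line hits in L1.
    std::string access(std::uint64_t line) {
        static const char* const names[] = {"L1", "L2", "L3"};
        for (std::size_t i = 0; i < levels.size(); ++i) {
            if (levels[i].count(line) != 0) {
                for (std::size_t j = 0; j < i; ++j) {
                    levels[j].insert(line);
                }
                return names[i];
            }
        }
        // Missed in every cache: fetch from main memory and fill all levels.
        for (auto& level : levels) {
            level.insert(line);
        }
        return "main memory";
    }
};
```

In this model, the first access to a line is served by main memory and a repeated access is served by L1, which mirrors why frequently used data should stay close to the core.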

CPU cache line

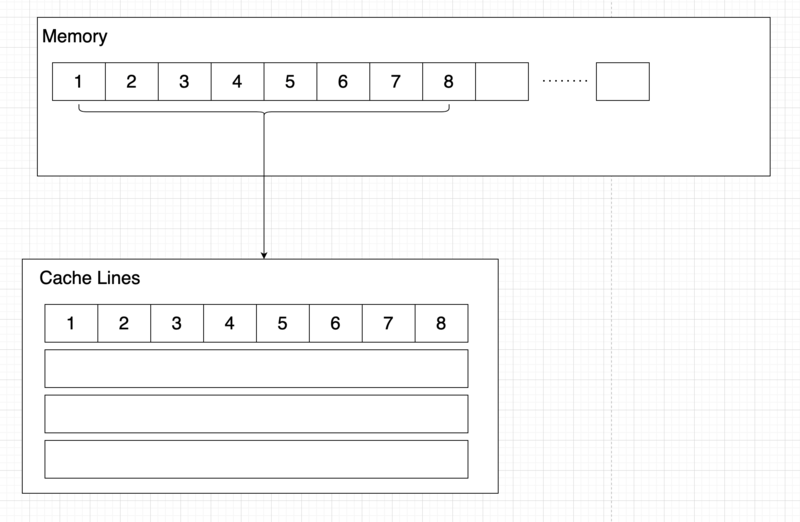

A cache is made up of cache lines. A line is usually 64 bytes (common processors have 64-byte cache lines; older processors have 32-byte cache lines), and each line corresponds to a contiguous block of addresses in main memory.

A C++ double is typically 8 bytes, so 8 double variables fit in one 64-byte cache line.
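The arithmetic can be written down as a small sketch. The constant `kCacheLineBytes` and the helper `elements_per_cache_line` are hypothetical names introduced here, and the 64-byte line size is an assumption rather than a value queried from the hardware.

```cpp
#include <cstddef>

// Assumed cache-line size in bytes. 64 is common, but this is an assumption,
// not something read from the machine the code runs on.
constexpr std::size_t kCacheLineBytes = 64;

// Number of whole objects of type T that fit in one assumed cache line.
template <typename T>
constexpr std::size_t elements_per_cache_line() {
    return kCacheLineBytes / sizeof(T);
}
```

On a platform where `sizeof(double)` is 8, `elements_per_cache_line<double>()` evaluates to 8, matching the count above.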

While a program runs, the cache is filled one line at a time: each load brings in 64 consecutive bytes from main memory. Thus, when one element of a double array is loaded into the cache, the 7 neighboring elements in the same line are loaded as well. However, if the items in a data structure are not adjacent in memory, as in a linked list, this benefit of cache-line loading is lost.
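To make the "8 doubles per line" grouping concrete, the following sketch maps array elements to line indices. It assumes a 64-byte line, 8-byte doubles, and a platform where converting a pointer to `std::uintptr_t` yields the plain address; `AlignedDoubles`, `cache_line_of`, and `same_cache_line` are hypothetical names used only for illustration.

```cpp
#include <cstddef>
#include <cstdint>

// Assumed cache-line size in bytes (an assumption, not queried from hardware).
constexpr std::size_t kAssumedLineBytes = 64;

// An array that starts exactly on an assumed cache-line boundary.
struct alignas(64) AlignedDoubles {
    double values[16];
};

// Index of the assumed cache line that an address falls into.
inline std::uintptr_t cache_line_of(const void* p) {
    return reinterpret_cast<std::uintptr_t>(p) / kAssumedLineBytes;
}

// True if elements i and j of the array lie in the same assumed cache line.
inline bool same_cache_line(const AlignedDoubles& a, std::size_t i, std::size_t j) {
    return cache_line_of(&a.values[i]) == cache_line_of(&a.values[j]);
}
```

Under these assumptions, elements 0 through 7 share one line and element 8 starts the next one, so `same_cache_line(a, 0, 7)` is true while `same_cache_line(a, 7, 8)` is false. The nodes of a linked list get no such guarantee, because each node can live anywhere in memory.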