
Latest revision as of 21:44, 13 April 2021

GPU621/DPS921


Intel Math Kernel Library

Team Name

Slightly Above Average

Group Members

- Jordan Sie

- James Inkster

- Varshan Nagarajan

Progress

100%/100%

Intel Math Library

Introduction

The Intel oneAPI Math Kernel Library (MKL) is, according to Intel, the fastest and most widely used math library for Intel-based systems. It provides a library of optimized math routines that help applications reach high performance, which can mean faster math processing and shorter development times. MKL covers seven main areas: Linear Algebra, Sparse Linear Algebra, Data Fitting, Fast Fourier Transforms, Random Number Generation, Summary Statistics and Vector Math. Intel states that these routines are designed to let applications be optimized for current and future Intel CPUs and GPUs.

Features

Linear Algebra

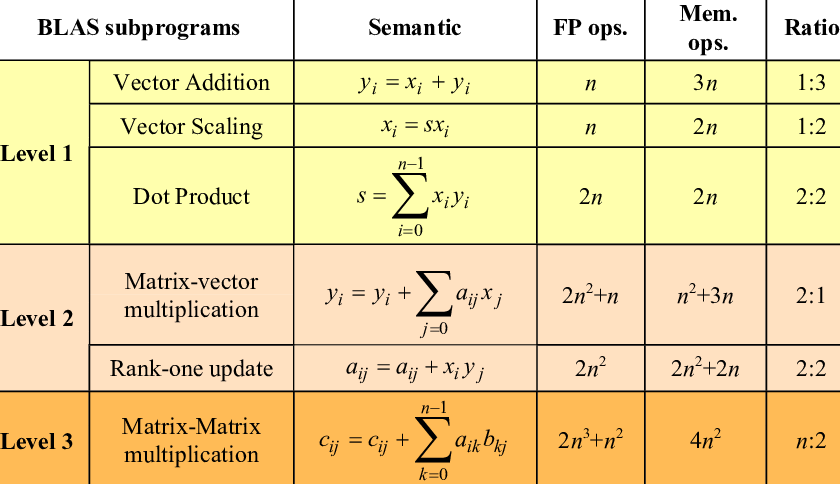

Intel implements linear algebra functions that follow the industry standards [BLAS](http://www.netlib.org/blas/) and [LAPACK](http://www.netlib.org/lapack/). The BLAS functions are grouped into three levels:

Level 1: Vector-vector operations

Level 2: Matrix-vector operations

Level 3: Matrix-matrix operations

Many implementations of these subprograms exist, each created with different purposes or platforms in mind. Intel's oneAPI implementation focuses heavily on performance, specifically on x86 and x86-64 processors.
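To make the three levels concrete, here is a minimal, deliberately unoptimized C++ sketch of one routine per level. It does not call MKL: `axpy`, `gemv` and `gemm` are our own illustrative functions, named after the BLAS routines they imitate, and they assume row-major storage with no transposition or scaling options. MKL's optimized versions are reached through its CBLAS interface (for example `cblas_daxpy`, `cblas_dgemv` and `cblas_dgemm`), whose signatures add layout, transpose, scaling and leading-dimension parameters; check the MKL reference before relying on them.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Matrices are stored row-major in a flat std::vector:
// element (i, j) of an m x n matrix lives at index i * n + j.

// Level 1 (vector-vector), in the style of AXPY: y := alpha * x + y
void axpy(double alpha, const std::vector<double>& x, std::vector<double>& y) {
    assert(x.size() == y.size());
    for (std::size_t i = 0; i < x.size(); ++i) {
        y[i] += alpha * x[i];
    }
}

// Level 2 (matrix-vector), in the style of GEMV: y := A * x, with A m x n
std::vector<double> gemv(const std::vector<double>& a, std::size_t m, std::size_t n,
                         const std::vector<double>& x) {
    assert(a.size() == m * n && x.size() == n);
    std::vector<double> y(m, 0.0);
    for (std::size_t i = 0; i < m; ++i) {
        for (std::size_t j = 0; j < n; ++j) {
            y[i] += a[i * n + j] * x[j];
        }
    }
    return y;
}

// Level 3 (matrix-matrix), in the style of GEMM: C := A * B,
// with A m x k, B k x n and C m x n.
std::vector<double> gemm(const std::vector<double>& a, const std::vector<double>& b,
                         std::size_t m, std::size_t k, std::size_t n) {
    assert(a.size() == m * k && b.size() == k * n);
    std::vector<double> c(m * n, 0.0);
    for (std::size_t i = 0; i < m; ++i) {
        for (std::size_t p = 0; p < k; ++p) {
            const double aip = a[i * k + p];  // reused across the whole row of B
            for (std::size_t j = 0; j < n; ++j) {
                c[i * n + j] += aip * b[p * n + j];
            }
        }
    }
    return c;
}
```

For example, multiplying the 2 x 2 matrices [1 2; 3 4] and [5 6; 7 8] with `gemm(a, b, 2, 2, 2)` gives [19 22; 43 50]. An optimized library replaces these plain loops with cache blocking, vectorization and threading, which is the kind of work a performance-focused implementation like MKL does for you.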

Sparse Linear Algebra Functions

MKL provides low-level inspector-executor routines for sparse matrices, such as:

- Multiply sparse matrix with dense vector

- Multiply sparse matrix with dense matrix

- Solve linear systems with triangular sparse matrices

- Solve linear systems with general sparse matrices

A sparse matrix is a matrix in which most entries are zero. Such matrices are common in machine learning applications. Processing them with standard (dense) linear algebra functions leads to poor performance and wastes storage on the zeros. Specially written sparse linear algebra functions store only the nonzero entries, which both saves space and avoids computing with the zeros.
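One common way to store only the nonzero entries is the compressed sparse row (CSR) format. The plain C++ sketch below converts a dense matrix to CSR and multiplies it by a dense vector, the first operation in the list above. `CsrMatrix`, `to_csr` and `csr_mv` are hypothetical names for illustration; MKL's inspector-executor interface has its own CSR handle and routines (such as `mkl_sparse_d_create_csr` and `mkl_sparse_d_mv`), which this code does not use.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Compressed Sparse Row storage. row_ptr has rows + 1 entries; the nonzeros
// of row i are values[row_ptr[i]] .. values[row_ptr[i + 1] - 1], and
// col_idx holds the matching column index of each stored value.
struct CsrMatrix {
    std::size_t rows = 0;
    std::size_t cols = 0;
    std::vector<std::size_t> row_ptr;
    std::vector<std::size_t> col_idx;
    std::vector<double> values;
};

// Build a CSR matrix from a dense row-major matrix, dropping zero entries.
CsrMatrix to_csr(const std::vector<double>& dense, std::size_t rows, std::size_t cols) {
    assert(dense.size() == rows * cols);
    CsrMatrix m;
    m.rows = rows;
    m.cols = cols;
    m.row_ptr.push_back(0);
    for (std::size_t i = 0; i < rows; ++i) {
        for (std::size_t j = 0; j < cols; ++j) {
            const double v = dense[i * cols + j];
            if (v != 0.0) {
                m.col_idx.push_back(j);
                m.values.push_back(v);
            }
        }
        m.row_ptr.push_back(m.values.size());  // end of row i
    }
    return m;
}

// Sparse matrix times dense vector: y := A * x.
// The work is proportional to the number of nonzeros, not rows * cols.
std::vector<double> csr_mv(const CsrMatrix& a, const std::vector<double>& x) {
    assert(x.size() == a.cols);
    std::vector<double> y(a.rows, 0.0);
    for (std::size_t i = 0; i < a.rows; ++i) {
        for (std::size_t k = a.row_ptr[i]; k < a.row_ptr[i + 1]; ++k) {
            y[i] += a.values[k] * x[a.col_idx[k]];
        }
    }
    return y;
}
```

For the 3 x 3 matrix with rows [4 0 0], [0 0 2] and [1 0 3], only four values are stored, and multiplying by [1 2 3] visits just those four entries to produce [4 6 10].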

Fast Fourier Transforms

FFTs are at the heart of many of today's enabling technologies, including most digital communications, audio compression, image compression and satellite TV. A fast Fourier transform (FFT) is an algorithm that computes the discrete Fourier transform (DFT) of a sequence, or its inverse (IDFT), in O(n log n) operations rather than the O(n²) needed to evaluate the DFT directly.
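The sketch below shows where the speedup comes from. `dft` sums every term directly, costing O(n²) operations, while `fft` is a textbook recursive radix-2 Cooley-Tukey FFT that splits the input into even- and odd-indexed halves, costing O(n log n). It assumes the input length is a power of two. This is not how MKL computes FFTs: MKL exposes its FFTs through the DFTI interface (`DftiCreateDescriptor`, `DftiCommitDescriptor`, `DftiComputeForward`), and production FFTs use iterative, cache-aware variants.

```cpp
#include <cassert>
#include <cmath>
#include <complex>
#include <cstddef>
#include <vector>

using cplx = std::complex<double>;

// Direct DFT, O(n^2): X[k] = sum_j x[j] * exp(-2*pi*i*j*k/n)
std::vector<cplx> dft(const std::vector<cplx>& x) {
    const std::size_t n = x.size();
    const double pi = std::acos(-1.0);
    std::vector<cplx> out(n);
    for (std::size_t k = 0; k < n; ++k) {
        cplx sum = 0.0;
        for (std::size_t j = 0; j < n; ++j) {
            sum += x[j] * std::polar(1.0, -2.0 * pi * double(j * k) / double(n));
        }
        out[k] = sum;
    }
    return out;
}

// Recursive radix-2 Cooley-Tukey FFT, O(n log n). n must be a power of two.
std::vector<cplx> fft(const std::vector<cplx>& x) {
    const std::size_t n = x.size();
    assert(n > 0 && (n & (n - 1)) == 0);
    if (n == 1) return x;
    // Split into even- and odd-indexed halves and transform each half.
    std::vector<cplx> even(n / 2), odd(n / 2);
    for (std::size_t i = 0; i < n / 2; ++i) {
        even[i] = x[2 * i];
        odd[i] = x[2 * i + 1];
    }
    const std::vector<cplx> e = fft(even);
    const std::vector<cplx> o = fft(odd);
    // Combine with twiddle factors w^k = exp(-2*pi*i*k/n):
    // X[k] = E[k] + w^k O[k],  X[k + n/2] = E[k] - w^k O[k]
    const double pi = std::acos(-1.0);
    std::vector<cplx> out(n);
    for (std::size_t k = 0; k < n / 2; ++k) {
        const cplx t = std::polar(1.0, -2.0 * pi * double(k) / double(n)) * o[k];
        out[k] = e[k] + t;
        out[k + n / 2] = e[k] - t;
    }
    return out;
}
```

Both functions should agree up to rounding error; for instance, the first output element of either is simply the sum of the inputs.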

Random Number Generator

The Intel Math Kernel Library provides an interface for random number generation (RNG) routines that use pseudorandom, quasi-random, and non-deterministic generators. These routines are built on calls to highly optimized Basic Random Number Generators (BRNGs). The BRNGs differ in speed and statistical properties, so it is easy to find one that suits your application.

The RNG routines fall into the following categories:

- Engines - hold the state of a generator

- Transformation classes - hold the different types of statistical distributions

- Generate function - the routine that obtains random numbers from a statistical distribution

- Services - routines that modify the state of the engine

Random numbers are generated in two steps:

1. Create the generator state using the engine.

2. Iterate over the engine's values; the output is the sequence of random numbers.
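The C++ standard library's `<random>` header uses a similar split, so it makes a convenient stand-in for illustrating the categories above: `std::mt19937_64` is the engine that holds the state, `std::normal_distribution` plays the role of a transformation class, the loop in `generate_gaussian` acts as the generate function, and `discard` is a service that advances the engine's state. This is standard C++, not MKL's interface, and `generate_gaussian` and `skip_ahead` are hypothetical helper names.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Generate function: draws n values from a Gaussian distribution
// (the transformation) using the engine (the state).
std::vector<double> generate_gaussian(std::mt19937_64& engine, std::size_t n,
                                      double mean, double stddev) {
    assert(stddev > 0.0);
    std::normal_distribution<double> dist(mean, stddev);
    std::vector<double> out(n);
    for (double& v : out) {
        v = dist(engine);
    }
    return out;
}

// Service-style routine: advance the engine by `count` raw outputs
// without producing any numbers.
void skip_ahead(std::mt19937_64& engine, unsigned long long count) {
    engine.discard(count);
}
```

Because all state lives in the engine, two engines created with the same seed produce identical sequences, and skipping ahead by k values on one engine leaves it at the same position as drawing k raw values from the other. Skip-ahead services like this are what let parallel workers use non-overlapping parts of a single random stream.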

Data Fitting

The Intel Math Kernel Library provides spline-based interpolation that can be used to approximate functions, compute derivatives and integrals, and perform cell search operations.

Data Fitting routines use the following workflow to process a task:

- Create a task or multiple tasks

- Modify the task parameters

- Perform a Data Fitting computation

- Destroy the task or tasks

Data Fitting functions:

- Task Creation and Initialization Routines

- Task Configuration Routines

- Computational Routines

- Task Destructors
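The following C++ sketch walks through the same create, configure, compute and destroy workflow for the simplest spline, a piecewise-linear one. The `LinearSplineTask` class and its methods are hypothetical and only mirror the workflow; MKL's C interface uses its own task routines (for example `dfdNewTask1D`, `dfdConstruct1D`, `dfdInterpolate1D` and `dfDeleteTask`, as we understand the reference) and supports higher-order splines such as cubic. In this sketch the C++ destructor plays the role of the task destructor.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical task object mirroring the Data Fitting workflow
// (create, configure, compute, destroy) for a piecewise-linear spline
// through the points (x[i], y[i]).
class LinearSplineTask {
public:
    // Create and initialize: partition points must be strictly increasing.
    LinearSplineTask(std::vector<double> x, std::vector<double> y)
        : x_(std::move(x)), y_(std::move(y)) {
        assert(x_.size() == y_.size() && x_.size() >= 2);
        assert(std::adjacent_find(x_.begin(), x_.end(),
                                  [](double a, double b) { return a >= b; }) == x_.end());
    }

    // Configure: replace the function values, keeping the same partition.
    void set_values(std::vector<double> y) {
        assert(y.size() == x_.size());
        y_ = std::move(y);
        built_ = false;  // coefficients must be rebuilt
    }

    // Compute, step 1: build the spline coefficients (one slope per cell).
    void construct() {
        slopes_.resize(x_.size() - 1);
        for (std::size_t i = 0; i + 1 < x_.size(); ++i) {
            slopes_[i] = (y_[i + 1] - y_[i]) / (x_[i + 1] - x_[i]);
        }
        built_ = true;
    }

    // Cell search: index i with x[i] <= t < x[i + 1]. Values of t outside
    // that range are clamped to the first or last cell.
    std::size_t cell(double t) const {
        const auto it = std::upper_bound(x_.begin(), x_.end(), t);
        const std::size_t i =
            (it == x_.begin()) ? 0 : static_cast<std::size_t>(it - x_.begin()) - 1;
        return std::min(i, x_.size() - 2);
    }

    // Compute, step 2: interpolated value at t.
    double interpolate(double t) const {
        assert(built_);
        const std::size_t i = cell(t);
        return y_[i] + slopes_[i] * (t - x_[i]);
    }

    // First derivative at t (constant inside each cell for a linear spline).
    double derivative(double t) const {
        assert(built_);
        return slopes_[cell(t)];
    }

    // Destroy: the destructor releases the task when it goes out of scope.

private:
    std::vector<double> x_, y_, slopes_;
    bool built_ = false;
};
```

With partition points 0, 1, 3 and values 0, 2, 6, the cell search places 2.0 in the second cell and `interpolate(2.0)` returns 4.0; calling `set_values` followed by `construct` reuses the same partition with new data.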

Summary Statistics

The Intel Math Kernel Library has an interface for Summary Statistics that can compute estimates for single- and double-precision multi-dimensional datasets. The dataset is described by parameters such as the precision, the dimensions of the user data, the matrix of observations, and the shapes of the data arrays. You first create and initialize the object for the dataset, then call a Summary Statistics routine to calculate the estimate.

The Summary Statistics routines calculate:

- Raw and central sums/moments up to the fourth order.

- Variation coefficient.

- Skewness and excess kurtosis.

- Minimum and maximum.

Additional Features:

- Detect outliers in datasets.

- Support missing values in datasets.

- Parameterize correlation matrices.

- Compute quantiles for streaming data.
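As a rough sketch of what several of these estimates are, the C++ function below computes them for a one-dimensional dataset from its central moments. `Summary` and `summarize` are hypothetical names. It uses population moments (dividing by n), which is an assumption on our part; MKL's own estimators, reached through its Summary Statistics task routines, may use different normalizations, so check the reference for the exact definitions.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Summary {
    double mean, variance, variation, skewness, excess_kurtosis, min, max;
};

// Estimates from central moments m_r = (1/n) * sum (x - mean)^r
// (population normalization, not the unbiased n - 1 form).
Summary summarize(const std::vector<double>& x) {
    assert(!x.empty());
    const double n = static_cast<double>(x.size());
    double sum = 0.0;
    for (double v : x) sum += v;
    const double mean = sum / n;

    double m2 = 0.0, m3 = 0.0, m4 = 0.0;
    for (double v : x) {
        const double d = v - mean;
        m2 += d * d;
        m3 += d * d * d;
        m4 += d * d * d * d;
    }
    m2 /= n;
    m3 /= n;
    m4 /= n;

    Summary s{};
    s.mean = mean;
    s.variance = m2;
    s.variation = std::sqrt(m2) / mean;            // coefficient of variation
    s.skewness = m3 / std::pow(m2, 1.5);
    s.excess_kurtosis = m4 / (m2 * m2) - 3.0;      // 0 for a normal distribution
    const auto mm = std::minmax_element(x.begin(), x.end());
    s.min = *mm.first;
    s.max = *mm.second;
    return s;
}
```

For the data 2, 4, 4, 4, 5, 5, 7, 9 the mean is 5, the population variance is 4 and the variation coefficient is 2 / 5 = 0.4.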

Vector Math

The Vector Math (VM) library in Intel MKL provides two main sets of functions:

- VM Mathematical Functions - compute values of mathematical functions, such as sine, cosine, exponential, or logarithm, on vectors stored in contiguous memory.

- VM Service Functions - control the accuracy of the calculations and handle errors in them, such as reporting error codes or error messages from invalid computations.
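Below is a small C++ sketch of both ideas: elementwise functions applied over contiguous arrays, and a status value that reports problems for the whole call. The `(n, a, y)` argument order is loosely modeled on MKL VM calls such as `vdSin`, but `vec_sin`, `vec_ln` and the `VmStatus` enum are hypothetical; real VM error handling goes through MKL's own service functions, which this code does not use.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// Hypothetical per-call status, standing in for VM-style error reporting.
enum class VmStatus { Ok, Singularity, DomainError };

// Elementwise sine over n contiguous doubles: y[i] = sin(a[i]).
void vec_sin(std::size_t n, const double* a, double* y) {
    assert(n == 0 || (a != nullptr && y != nullptr));
    for (std::size_t i = 0; i < n; ++i) {
        y[i] = std::sin(a[i]);
    }
}

// Elementwise natural log: y[i] = ln(a[i]). Every output is written;
// the returned status records the most serious problem seen:
// a negative input (NaN result) outranks a zero input (-infinity result).
VmStatus vec_ln(std::size_t n, const double* a, double* y) {
    assert(n == 0 || (a != nullptr && y != nullptr));
    VmStatus status = VmStatus::Ok;
    for (std::size_t i = 0; i < n; ++i) {
        if (a[i] < 0.0) {
            status = VmStatus::DomainError;
        } else if (a[i] == 0.0 && status == VmStatus::Ok) {
            status = VmStatus::Singularity;
        }
        y[i] = std::log(a[i]);
    }
    return status;
}
```

For example, `vec_ln` on the inputs 1 and -1 still fills both outputs (0 and NaN) but returns `VmStatus::DomainError`, so the caller can check one status after processing a whole vector instead of testing every element.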

Code Samples

These samples come directly from Intel's oneAPI code samples. All of our code examples were taken from Intel's samples repository on GitHub: [oneAPI GitHub](https://github.com/oneapi-src/oneAPI-samples).

Vector Add & MatMul

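The two samples we included in our presentation are [Mat Mul](https://github.com/oneapi-src/oneAPI-samples/blob/master/DirectProgramming/DPC%2B%2B/DenseLinearAlgebra/matrix_mul/src/matrix_mul_omp.cpp) and [Vector-add](https://github.com/oneapi-src/oneAPI-samples/blob/master/DirectProgramming/DPC%2B%2B/DenseLinearAlgebra/vector-add/src/vector-add-usm.cpp).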

Conclusion

The Intel oneAPI Math Kernel Library is available as part of the oneAPI Base Toolkit and supports programming languages such as C, C++, C#, DPC++ and Fortran. Its features can help financial, scientific and engineering applications run efficiently. MKL is regularly updated on the Intel oneAPI website, and many examples and tutorials are available on Intel's GitHub. If you have any questions, feel free to ask us or refer to the Intel oneAPI website.

Presentation

Animated GIF of the Presentation

PDF File: [Intel_Math_Kernel_Library.pdf](https://wiki.cdot.senecacollege.ca/w/imgs/Intel_Math_Kernel_Library.pdf)